Weaponized AI and Deepfakes: Why Human Risk is Today's Biggest Business Risk

76% of UK organizations have already been targeted by deepfake attacks. Discover how weaponized AI is industrializing social engineering and what NIS2 requires from Senior Management.

76% of organizations in the UK have already been targeted by deepfake attacks, according to TechRadar. This figure is not a future projection but an active operational crisis. The resilience gap is alarming: only 40% of companies consider themselves prepared. That leaves a gap of 36 percentage points between exposure and preparedness, which reveals a "fictional readiness." In the 2026 ecosystem, traditional defense based on weekly patching can no longer keep pace with the speed of AI. The risk is no longer a theoretical possibility; it is an ongoing incident that systematically bypasses untrained human judgment.

AI as a Multiplier for Social Engineering

The concept of "Weaponized AI" has transformed psychological manipulation into an industrial-scale process. It is not a code failure, but the democratization of elite cybercrime. According to the ENISA Threat Landscape 2025 report, artificial intelligence is today the engine for more than 80% of advanced phishing campaigns, collapsing the barriers to entry for attackers with minimal resources.

3 Factors that Industrialize Social Engineering:

- Subscription to Low-Cost Dark LLMs: AI models without ethical restrictions available on the black market for fees from $30 to $200, eliminating the need for advanced technical knowledge to create attacks.

- Reconnaissance Automation (OSINT): Tools that collect public data from your team in seconds, allowing for the personalization of scams on a massive scale without manual human intervention.

- Linguistic Perfection and Voice Cloning: AI eliminates grammatical errors and allows for the creation of vishing with exact voice clones of executives, nullifying the traditional warning signs of identity attacks.

The Evolution of the Attack: From Phishing to Deepfake Vishing

"CEO Fraud 2.0" is not a warning; it is the current operating standard. Attackers have moved from malicious email to hyper-realistic multi-channel impersonation. In a single case in Hong Kong, a company lost $25 million after a video call in which every participant except the victim was an AI-generated avatar. Verified global losses from deepfake-enabled identity fraud already reach $347 million, and a single financial institution has recorded as many as 8,065 fraud attempts.

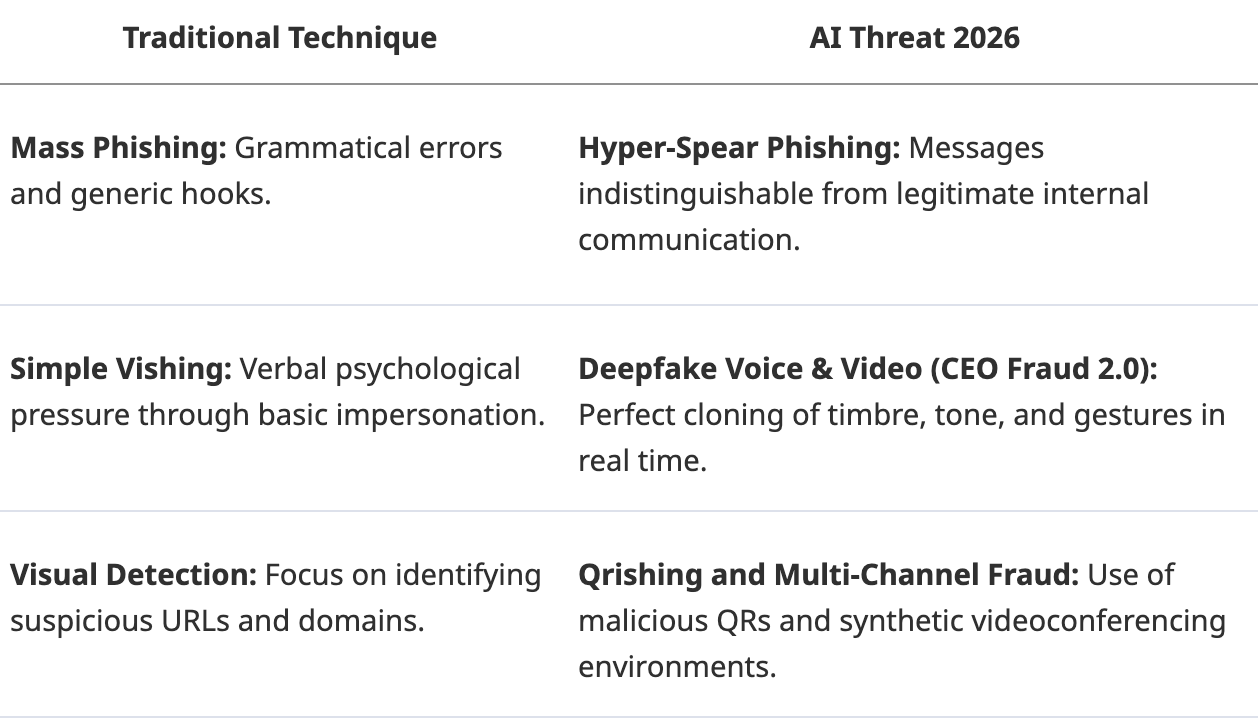

To understand the technical scope of this threat, it is essential to analyze the Definitive Guide to Vishing & Deepfake Voice. The qualitative leap is summarized in the following comparison:

Our Field Experience: Real Exposure Data

At Kymatio, through our Social Attack Simulations, we have observed a critical change in organizational behavior:

- Drop in Reporting Rate: When a vishing attack uses synthetic voice cloning, the internal reporting rate among employees drops by up to 40% compared to traditional text phishing.

- The Speed of Doubt: In organizations that do not proactively manage human risk, employees interact with an AI-generated malicious link in less than 60 seconds.

- The Impact of ROSI: Companies that activate their human firewalls and measure ROSI (Return on Security Investment) reduce the probability of success of these industrial attacks by 80% after the first six months of continuous training.

Proactive Management vs. Passive Awareness: Activating the Human Firewall

The static learning model is dead. In an environment where an attacker can turn a newly released patch into a working exploit within 72 hours, organizations cannot rely on awareness programs built on annual content. Human Risk Management (HRM) emerges as the only strategic response that transforms vulnerability into a measurable resilience metric, allowing the CISO to speak the language of risk and return (ROSI) demanded by the Board of Directors.

Kymatio activates "Human Firewalls" through Social Attack Simulations (phishing, vishing, deepfakes) that operate under a principle of reality:

- ROSI Measurement: Quantification of the return on security investment by drastically reducing the probability of attack success.

- High-Fidelity Simulation: Dynamic training that prepares your team to detect manipulation under pressure, something no static course can achieve.

- Data-Driven Security Culture: Precise identification of vulnerable groups before weaponized AI exploits their behavioral gaps.
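To make the ROSI measurement mentioned in the list above more concrete, here is a minimal sketch in Python. It assumes a commonly cited formulation, ROSI = (avoided loss - cost) / cost, which is not necessarily the model Kymatio applies. The function name `rosi`, its parameters, and every monetary figure are hypothetical; only the 80% reduction echoes the figure quoted earlier in this article.

```python
def rosi(annual_loss_expectancy: float,
         risk_reduction: float,
         annual_cost: float) -> float:
    """Return ROSI as a ratio: net avoided loss per unit of money spent.

    annual_loss_expectancy: expected yearly loss without the program (assumed input)
    risk_reduction: fraction of that loss the program avoids, between 0 and 1
    annual_cost: yearly cost of the program, must be positive
    """
    if not 0.0 <= risk_reduction <= 1.0:
        raise ValueError("risk_reduction must be between 0 and 1")
    if annual_cost <= 0:
        raise ValueError("annual_cost must be positive")

    avoided_loss = annual_loss_expectancy * risk_reduction
    return (avoided_loss - annual_cost) / annual_cost


# Hypothetical figures for illustration only.
value = rosi(annual_loss_expectancy=500_000,
             risk_reduction=0.80,
             annual_cost=100_000)
print(f"ROSI: {value:.0%}")
```

With these illustrative inputs the avoided loss is 400,000 against a cost of 100,000, so the ratio works out to 3.0. A negative result would mean the program costs more than the loss it avoids, which is the kind of signal a Board can act on.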

Executive Responsibility and the Legal Framework (NIS2/DORA)

Cybersecurity is no longer just an IT problem; it is a direct legal responsibility for the C-Suite. Under the NIS2 directive, the Board of Directors must demonstrably approve, supervise, and finance risk management. A lack of due diligence no longer entails only fines of up to €10M or 2% of global turnover; the real focus is personal liability.

Regulators now have the power to temporarily disqualify executives from exercising their duties in case of serious non-compliance. NIS2 demands proactive management: if a deepfake attack is successful and it is demonstrated that the management did not implement human risk control measures, the legal consequences fall on the Board. To delve into these implications, consult the C-Suite Responsibility in NIS2.

How does NIS2 affect executives in the face of a Deepfake attack?

Under the NIS2 directive framework, Senior Management is directly responsible for risk supervision. A successful deepfake incident without evidence of proactive human risk management can lead to:

- Fines of up to €10M or 2% of global turnover.

- Civil liability of the directors.

- Temporary disqualification from management functions.
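The fine in the first item has two components, a fixed amount and a share of turnover. As a rough illustration, the sketch below assumes the "whichever is higher" rule that NIS2 sets for essential entities; the actual ceiling depends on the entity category and on how each Member State has transposed the directive. The function name `nis2_fine_ceiling_eur`, its parameters, and the turnover figures are hypothetical.

```python
def nis2_fine_ceiling_eur(global_turnover_eur: int,
                          fixed_cap_eur: int = 10_000_000,
                          turnover_pct: int = 2) -> int:
    """Return the upper bound of the fine in whole euros.

    Assumption: the ceiling is the higher of the fixed amount and the
    percentage of global annual turnover. Integer arithmetic is used to
    avoid floating-point rounding on monetary values.
    """
    if global_turnover_eur < 0:
        raise ValueError("turnover cannot be negative")

    turnover_based = global_turnover_eur * turnover_pct // 100
    return max(fixed_cap_eur, turnover_based)


# Hypothetical companies for illustration only.
for turnover in (100_000_000, 1_000_000_000):
    ceiling = nis2_fine_ceiling_eur(turnover)
    print(f"turnover {turnover:,} EUR -> ceiling {ceiling:,} EUR")
```

Under this assumption, the fixed amount dominates for smaller companies, while the turnover-based component takes over once global turnover exceeds €500M.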

Conclusion: Taking Control of the Human Factor

Preparation is not a feeling; it is a metric. In the era of hostile AI, organizational resilience is not measured by the robustness of its technological firewalls, but by the ability of its team to act as an active and coordinated defense.

It is imperative to carry out advanced simulations that identify exposure points before an industrialized attacker does. The first step is to anticipate new attack techniques, such as quishing and synthetic voice fraud, integrating the Advanced Phishing Trends 2026 into the core of your business strategy. Only a dynamic Security Culture will allow your organization to maintain operability in a market where human risk is, more than ever, business risk.

Frequently Asked Questions